In-Context Learning (ICL): How Modern LLMs Learn Without Retraining

Large Language Models (LLMs) have transformed how we build intelligent systems. One of the most powerful capabilities behind their flexibility is In-Context Learning (ICL). Instead of retraining the model every time we want it to perform a new task, we can guide it using examples directly in the prompt.

What is In-Context Learning?

In-Context Learning refers to the ability of a language model to learn patterns from examples provided within the prompt itself, without updating the model’s parameters.

This means the model adapts its responses based on the examples you provide in the same input context. The adaptation lasts only for that prompt, because the model's weights are left unchanged.

For AI engineers, this is powerful because it allows rapid experimentation and task adaptation without expensive training pipelines.

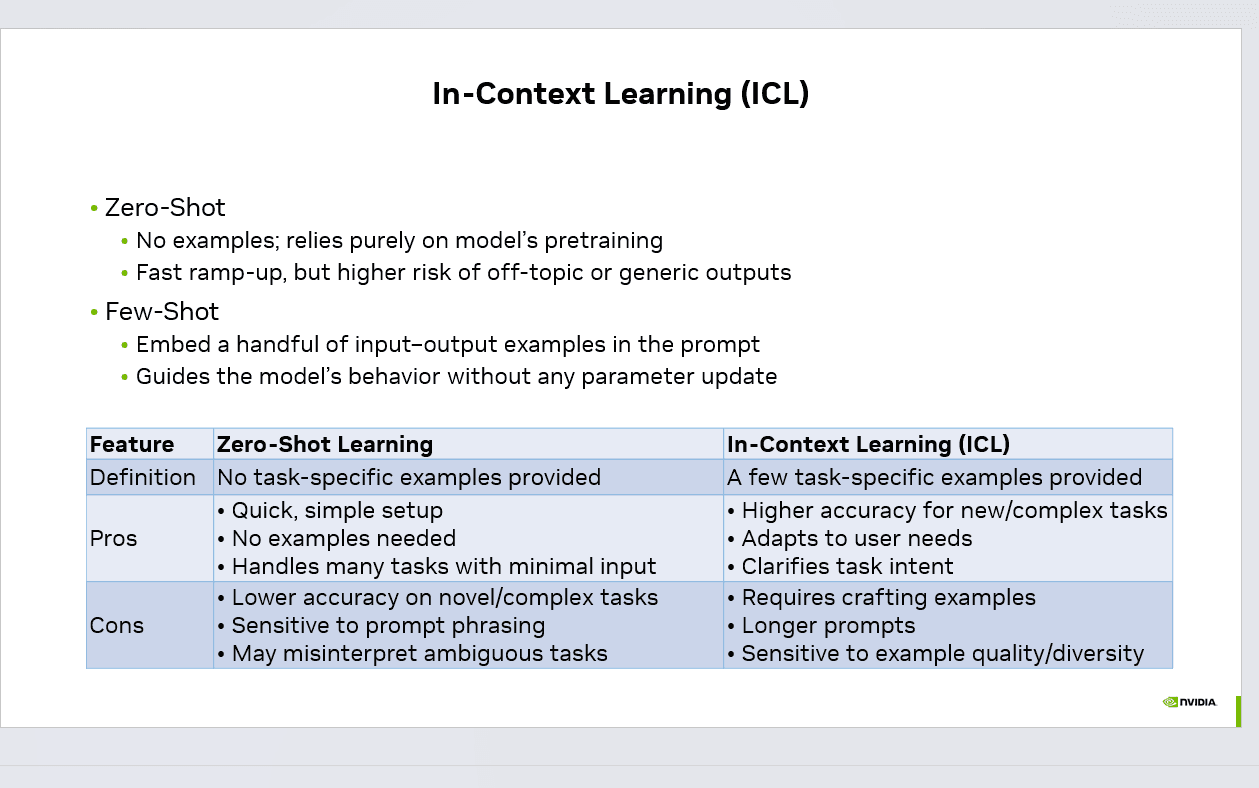

Zero-Shot Learning

Zero-shot learning is the simplest way to use an LLM.

Here, no examples are provided. The model relies entirely on its pretraining knowledge to understand and respond to the task.

Example:

Text: "This product is amazing."

Task: Classify sentiment
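
As a minimal sketch, the zero-shot example above can be assembled into a single prompt string. The helper name `build_zero_shot_prompt`, the label list, and the exact prompt wording are assumptions made for illustration; they are not a required format, and sending the string to a model is left out because that depends on the provider you use.

```python
def build_zero_shot_prompt(text: str, labels: list[str]) -> str:
    """Build a prompt that states the task but contains no worked examples.

    Hypothetical helper: the wording of the instruction is an assumption.
    """
    label_list = ", ".join(labels)
    return (
        f"Classify the sentiment of the text as one of: {label_list}.\n"
        f'Text: "{text}"\n'
        "Sentiment:"
    )


zero_shot_prompt = build_zero_shot_prompt(
    "This product is amazing.", ["Positive", "Negative"]
)
print(zero_shot_prompt)
```

The prompt ends with an open "Sentiment:" slot, so the model's continuation is the label itself.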

Advantages:

Quick and simple setup

No examples needed

Works well for common tasks

Limitations:

Lower accuracy for complex tasks

Sensitive to prompt wording

May produce generic outputs

Few-Shot Learning

Few-shot learning improves performance by adding a few input-output examples in the prompt. These examples guide the model on how to behave.

Example:

Text: I love this movie

Sentiment: Positive

Text: This is the worst service ever

Sentiment: Negative

Text: The product quality is great

Sentiment:
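
The few-shot prompt above can be generated from a list of labeled examples rather than written by hand. This is a minimal sketch: `build_few_shot_prompt` is a hypothetical helper, and the "Text:" / "Sentiment:" layout simply mirrors the example in this article.

```python
def build_few_shot_prompt(examples: list[tuple[str, str]], query: str) -> str:
    """Format (text, label) pairs as demonstrations, then append the unanswered query."""
    blocks = [f"Text: {text}\nSentiment: {label}" for text, label in examples]
    # The final block leaves the label empty so the model completes it.
    blocks.append(f"Text: {query}\nSentiment:")
    return "\n\n".join(blocks)


examples = [
    ("I love this movie", "Positive"),
    ("This is the worst service ever", "Negative"),
]
few_shot_prompt = build_few_shot_prompt(examples, "The product quality is great")
print(few_shot_prompt)
```

Keeping the examples in a list makes it easy to swap them out while experimenting, without touching the prompt layout.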

Advantages:

Higher accuracy for complex tasks

Helps clarify task intent

Enables customized outputs

Limitations:

Requires carefully crafted examples

Longer prompts

Output quality depends on how representative and diverse the examples are
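
One way to act on the last two limitations is to cap the number of examples and spread them across labels. The sketch below is one possible heuristic, not an established rule: `pick_balanced_examples` is a hypothetical helper, and the example pool is made up for illustration.

```python
from collections import defaultdict
from itertools import zip_longest


def pick_balanced_examples(
    pool: list[tuple[str, str]], k: int
) -> list[tuple[str, str]]:
    """Pick up to k (text, label) pairs, cycling through the labels round-robin."""
    by_label: dict[str, list[tuple[str, str]]] = defaultdict(list)
    for text, label in pool:
        by_label[label].append((text, label))

    picked: list[tuple[str, str]] = []
    # Each round takes one example per label; None pads labels that ran out.
    for round_items in zip_longest(*by_label.values()):
        for item in round_items:
            if item is not None and len(picked) < k:
                picked.append(item)
    return picked


pool = [
    ("I love this movie", "Positive"),
    ("The product quality is great", "Positive"),
    ("Fantastic support team", "Positive"),
    ("This is the worst service ever", "Negative"),
]
selected = pick_balanced_examples(pool, 2)
print(selected)
```

With a pool skewed toward one label, taking the first `k` items would show the model only positive examples. Cycling through labels keeps the prompt short while still covering each class.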

Why In-Context Learning Matters

ICL is one of the main reasons LLMs are so versatile. Instead of training a new model for every task, developers can simply guide the model with examples inside the prompt.

This enables:

Rapid prototyping of AI applications

Faster experimentation in prompt engineering

Reduced need for costly fine-tuning

Flexible task adaptation across domains

Many modern systems such as AI agents, chatbots, document analysis tools, and coding assistants rely heavily on in-context learning to improve response quality.

When to Use Each Approach

Use Zero-Shot when:

The task is simple

The model already understands the domain

Speed and simplicity matter

Use Few-Shot when:

The task is complex or ambiguous

You need structured outputs

You want more consistent responses

Final Thoughts

In-Context Learning is one of the most practical techniques in modern AI development. With just a few well-designed examples, we can guide powerful models to perform new tasks without retraining.

As LLM capabilities continue to evolve, prompt design and example selection will remain critical skills for AI engineers building real-world intelligent systems.

What are some interesting ways you’ve used in-context learning in your projects?

#AI #LLM #MachineLearning #PromptEngineering #GenerativeAI #ArtificialIntelligence
