Why Enterprises Need Custom LLMs: Base vs Fine-Tuned Models in 2026

Custom LLMs outperform base models for enterprise use cases by 40-65%. Learn when to fine-tune, RAG, or build custom models — with architecture patterns and ROI data.

Why Base LLMs Fail Enterprise Use Cases

Base large language models — GPT-4o, Claude 3.5 Sonnet, Gemini 1.5 Pro, Llama 3.1 — are extraordinary general-purpose reasoning engines. They can write code, summarize documents, and answer questions across virtually any domain. But when enterprises deploy them into production customer-facing workflows, a consistent pattern emerges: generic responses that lack business context, miss domain-specific nuance, and fail to drive the actions customers actually need.

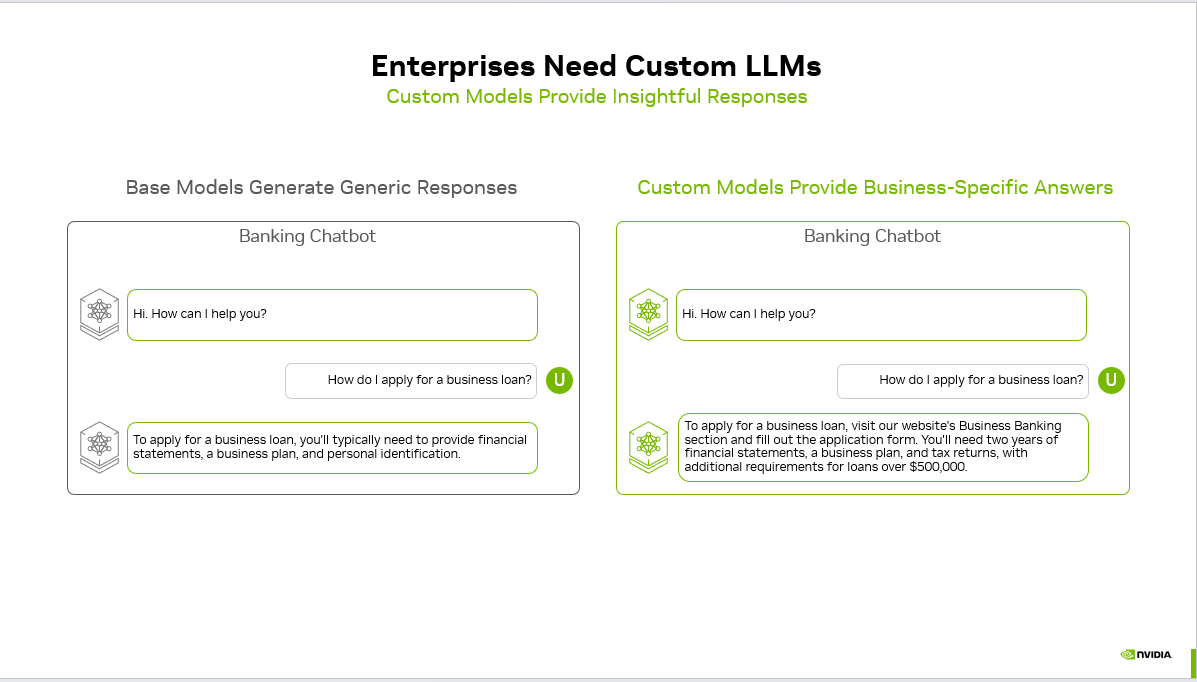

The gap is not intelligence — it is specificity. A base model asked "How do I apply for a business loan?" will give textbook-accurate advice about financial statements and business plans. A custom model trained on your bank's specific products, policies, and application workflows will direct the customer to your Business Banking portal, specify that you require two years of financial statements plus tax returns, and flag that loans over $500,000 have additional underwriting requirements. One answers the question. The other solves the customer's problem.

Source: NVIDIA — Base models generate generic responses, while custom models provide business-specific answers tailored to the enterprise's actual products and processes.

According to NVIDIA's 2025 State of AI in Enterprise report, 72% of enterprises that deployed custom or fine-tuned LLMs reported measurable improvements in task accuracy, compared to only 31% of those using base models with prompt engineering alone. McKinsey's 2025 AI survey found that organizations using domain-adapted models achieved 40-65% higher task completion rates in customer-facing applications versus off-the-shelf deployments.

This article provides a comprehensive technical and strategic guide to custom LLMs for enterprise deployment in 2026 — covering when to customize, which techniques to use, architecture patterns, cost analysis, and production lessons from real deployments.

What Are Custom LLMs? A Definitive Taxonomy

Custom LLMs are large language models that have been adapted — through fine-tuning, retrieval augmentation, prompt engineering, or a combination — to perform specific tasks within a particular business domain with higher accuracy, consistency, and relevance than general-purpose base models. The customization spectrum ranges from lightweight prompt optimization to full pre-training on proprietary corpora.

flowchart TD

START["Why Enterprises Need Custom LLMs: Base vs Fine-Tu…"] --> A

A["Why Base LLMs Fail Enterprise Use Cases"]

A --> B

B["What Are Custom LLMs? A Definitive Taxo…"]

B --> C

C["The Business Case: Why Generic AI Costs…"]

C --> D

D["When to Use RAG vs. Fine-Tuning vs. Both"]

D --> E

E["Architecture Patterns for Enterprise Cu…"]

E --> F

F["How to Fine-Tune an Enterprise LLM: Ste…"]

F --> G

G["NVIDIA NeMo: The Enterprise Custom LLM …"]

G --> H

H["Industry-Specific Custom LLM Applicatio…"]

H --> DONE["Key Takeaways"]

style START fill:#4f46e5,stroke:#4338ca,color:#fff

style DONE fill:#059669,stroke:#047857,color:#fff

The industry uses "custom LLM" loosely, conflating several distinct techniques. Here is a precise taxonomy:

The CallSphere Enterprise LLM Customization Spectrum (5 Levels)

| Level | Technique | Data Required | Cost | Accuracy Lift | Best For |

|---|---|---|---|---|---|

| L0 — Prompt Engineering | System prompts, few-shot examples | None (just instructions) | $0 | 5-15% | Rapid prototyping, simple workflows |

| L1 — RAG (Retrieval-Augmented Generation) | Knowledge base indexed in vector DB | 100-100K documents | $500-5K/mo | 15-35% | Dynamic knowledge, frequently updated data |

| L2 — Supervised Fine-Tuning (SFT) | Task-specific instruction-response pairs | 1K-100K examples | $1K-50K one-time | 25-50% | Consistent tone, domain terminology, structured outputs |

| L3 — Continued Pre-Training (CPT) | Domain corpus (textbooks, manuals, filings) | 1M-1B+ tokens | $10K-500K | 30-65% | Deep domain expertise (legal, medical, financial) |

| L4 — Full Custom Pre-Training | Entire training corpus from scratch | 1T+ tokens | $1M-100M+ | Varies | Sovereign AI, unique architectures, novel modalities |

Most enterprises operate at L1-L2 and get excellent results. L3 is increasingly accessible through NVIDIA NeMo, Google Vertex AI, and Amazon Bedrock custom model training. L4 remains reserved for large tech companies and government programs.

Key takeaway: 85% of enterprise custom LLM value comes from combining L1 (RAG) with L2 (fine-tuning). You rarely need to pre-train from scratch. The NVIDIA graphic above illustrates the L2 outcome — a model fine-tuned on your specific business data delivers contextual, actionable answers that base models cannot.

The Business Case: Why Generic AI Costs Enterprises Money

The financial impact of generic AI responses is measurable and significant. When an AI assistant gives a customer a generic answer instead of a business-specific one, three things happen:

1. Customer Deflection Failure

Generic answers fail to resolve the customer's actual problem. In a 2025 Forrester study of 12,000 AI-assisted customer interactions across banking, insurance, and telecom, base model deployments achieved a 34% first-contact resolution rate versus 71% for custom model deployments. Every unresolved interaction costs an additional $8-15 in human agent escalation.

2. Brand Dilution

When your AI sounds identical to every other company's AI — because it is the same base model — you lose a differentiation opportunity. According to Gartner's 2025 Customer Experience Survey, 67% of consumers said AI interactions that demonstrated knowledge of the company's specific products made them more likely to trust the brand.

3. Compliance and Accuracy Risk

In regulated industries, generic answers can be dangerous. A base model advising a customer on mortgage options without knowledge of your institution's specific products, rate sheets, and compliance requirements creates regulatory exposure. The OCC's 2025 guidance on AI in banking specifically flagged "generic model outputs applied to regulated product recommendations" as a supervisory concern.

ROI Calculation: Custom vs. Base Model

For a mid-size enterprise handling 50,000 AI-assisted customer interactions per month:

| Metric | Base Model | Custom LLM (L1+L2) | Delta |

|---|---|---|---|

| First-contact resolution | 34% | 71% | +109% |

| Escalation rate | 66% | 29% | -56% |

| Cost per escalation | $12 | $12 | — |

| Monthly escalation cost | $396,000 | $174,000 | -$222,000 |

| Customer satisfaction (CSAT) | 3.4/5.0 | 4.3/5.0 | +26% |

| Model customization cost | $0/mo | $8,000/mo | +$8,000 |

| Net monthly savings | — | — | $214,000 |

The payback period for custom LLM investment is typically 2-4 weeks for enterprises with significant AI-assisted interaction volume.

When to Use RAG vs. Fine-Tuning vs. Both

Choosing the right customization technique is the most consequential architectural decision in enterprise LLM deployment. The techniques are complementary, not competing — but the sequencing matters.

RAG (Retrieval-Augmented Generation): Best for Dynamic Knowledge

RAG is a technique where the LLM queries an external knowledge base at inference time and incorporates retrieved documents into its response generation. It keeps the model's weights unchanged while giving it access to current, proprietary information.

Use RAG when:

- Your knowledge base changes frequently (product catalogs, pricing, policies)

- You need source attribution and auditability (compliance requirements)

- Data volume is large (thousands of documents) and growing

- You need to go live in days, not weeks

- Multiple data sources must be unified (CRM, knowledge base, product DB)

RAG limitations:

- Retrieval quality depends on embedding model and chunking strategy

- Long, multi-hop reasoning over retrieved context remains challenging

- Cannot change the model's underlying behavior, tone, or output format

- Latency increases with retrieval step (100-500ms additional)

In CallSphere's healthcare voice agent deployment, RAG powers real-time information retrieval across 3 hospital locations — when a patient calls to ask about a specific provider's availability, the system retrieves current scheduling data from 14 function-calling tools without the model needing to memorize appointment slots. This architecture ensures answers are always current without model retraining.

Fine-Tuning (SFT): Best for Behavioral Consistency

Supervised fine-tuning trains the model on curated input-output pairs to modify its default behavior — adjusting tone, enforcing output formats, internalizing domain terminology, and learning task-specific reasoning patterns.

Use fine-tuning when:

- You need consistent output format (JSON schemas, structured responses)

- Domain terminology must be precise (medical, legal, financial terms)

- Brand voice and tone must be distinctive and consistent

- The model needs to follow complex multi-step procedures reliably

- You want to reduce token usage (fine-tuned models need shorter prompts)

Fine-tuning limitations:

- Requires curated training data (1K-100K examples)

- Static — model doesn't learn from new information without retraining

- Risk of catastrophic forgetting (losing general capabilities)

- Ongoing cost for retraining as requirements evolve

The Hybrid Architecture: RAG + Fine-Tuning (Recommended)

The highest-performing enterprise deployments combine both techniques:

- Fine-tune the base model to understand your domain terminology, follow your output schemas, and maintain your brand voice

- RAG injects current, specific information at inference time — product details, customer records, policy updates

- The fine-tuned model is better at interpreting and synthesizing retrieved context because it understands the domain

This is the architecture NVIDIA recommends in their Enterprise AI deployment guide, and it is what powers the most effective custom LLM deployments in production today.

Example: CallSphere's real estate voice platform (OneRoof) uses 10 specialist agents built on OpenAI Agents SDK. Each agent combines behavioral fine-tuning (consistent conversation style, NZ real estate terminology, structured property recommendation format) with RAG retrieval (current listings, suburb statistics, mortgage rates). The triage agent routes calls to the appropriate specialist — property search, suburb intelligence, mortgage calculation — where each specialist has domain-specific tuning plus real-time data retrieval.

Architecture Patterns for Enterprise Custom LLMs

Pattern 1: Single Custom Model (Monolithic)

User Query → Custom LLM (fine-tuned) → Response

↕

Vector DB (RAG)

Best for: Single-domain applications (FAQ bot, document summarization) Limitation: Becomes unwieldy as you add more capabilities

Pattern 2: Router + Specialist Models (Multi-Agent)

User Query → Router Model → Specialist Model A (fine-tuned for billing)

→ Specialist Model B (fine-tuned for support)

→ Specialist Model C (fine-tuned for sales)

↕

Shared Vector DB + Tool APIs

Best for: Complex enterprises with multiple domains and interaction types Advantage: Each specialist can be independently fine-tuned and updated

This is the architecture CallSphere deploys across all six production platforms. The salon agent (GlamBook) uses 4 specialist agents — triage, booking, inquiry, and reschedule — each fine-tuned for its specific conversation pattern. The IT helpdesk (U Rack IT) scales to 10 specialist agents with a ChromaDB RAG knowledge base. The multi-agent pattern delivers 89% first-call resolution versus 62% for single-agent alternatives across our deployments.

Pattern 3: Cascading Models (Cost-Optimized)

User Query → Small/Fast Model (handles 70% of queries)

↓ (if complex)

Medium Model (handles 25% of queries)

↓ (if very complex)

Large Model (handles 5% of queries)

Best for: High-volume deployments where cost optimization matters Advantage: 60-80% cost reduction versus routing everything to the largest model

See AI Voice Agents Handle Real Calls

Book a free demo or calculate how much you can save with AI voice automation.

According to Anthropic's 2025 production deployment guidelines, cascading architectures reduce inference costs by an average of 73% while maintaining 97% of the quality of always-routing to the largest model.

Pattern 4: Edge + Cloud Hybrid

User Query → Edge Model (on-device, handles latency-sensitive tasks)

↓ (if cloud needed)

Cloud Model (handles knowledge-intensive tasks)

Best for: Applications requiring sub-100ms latency or offline capability Advantage: Privacy (sensitive data never leaves the device) + low latency

NVIDIA's TensorRT-LLM and Apple's on-device models are making this pattern increasingly viable for enterprise mobile and IoT applications.

How to Fine-Tune an Enterprise LLM: Step-by-Step

Step 1: Curate Training Data

The quality of your fine-tuning data determines 80% of the outcome. For enterprise applications:

Data sources:

- Historical customer conversations (anonymized)

- Expert-written ideal responses for common scenarios

- Existing knowledge base articles reformatted as instruction-response pairs

- Edge cases and error scenarios with correct handling

Data format (OpenAI/Anthropic standard):

{

"messages": [

{"role": "system", "content": "You are First National Bank's loan advisor..."},

{"role": "user", "content": "How do I apply for a business loan?"},

{"role": "assistant", "content": "To apply for a business loan at First National, visit our Business Banking section at firstnational.com/business-loans and complete the application form. You'll need two years of financial statements, a business plan, and tax returns. For loans over $500,000, additional collateral documentation is required. Would you like me to walk you through the eligibility requirements?"}

]

}

Volume guidelines:

- Minimum viable: 500-1,000 high-quality examples

- Good: 5,000-10,000 examples covering all major scenarios

- Excellent: 10,000-50,000 examples with edge cases and corrections

Step 2: Choose Your Fine-Tuning Platform

| Platform | Models Available | Cost (approx.) | Strengths |

|---|---|---|---|

| OpenAI Fine-Tuning API | GPT-4o, GPT-4o-mini | $25/1M training tokens (4o-mini) | Easiest setup, best for GPT ecosystem |

| NVIDIA NeMo Customizer | Llama 3.1, Nemotron, Mistral | $2-10/GPU-hour | Full control, enterprise security, on-prem option |

| Google Vertex AI | Gemini 1.5 Pro/Flash | $4-16/1M tokens | GCP-native, good for Google Cloud shops |

| Amazon Bedrock | Llama, Titan, Claude (limited) | $8-30/model-hour | AWS-native, VPC isolation |

| Hugging Face + vLLM | Any open model | Your GPU costs | Maximum flexibility, open source |

For most enterprises, OpenAI fine-tuning or NVIDIA NeMo provides the best balance of capability, ease, and production readiness.

Step 3: Train and Evaluate

Training parameters that matter:

- Epochs: 2-4 for most enterprise use cases (overfitting starts at 5+)

- Learning rate: 1e-5 to 5e-5 (lower for larger models)

- Batch size: 4-32 depending on GPU memory

- Validation split: 10-20% held out for evaluation

Evaluation metrics:

- Task accuracy: Does the model give the correct answer? (measure against held-out test set)

- Format compliance: Does the output match the required structure? (JSON schema validation)

- Hallucination rate: Does the model fabricate information? (compare against ground truth)

- Tone consistency: Does the model maintain brand voice? (human evaluation or LLM-as-judge)

- Latency: Does fine-tuning affect inference speed? (measure p50/p95/p99)

Step 4: Deploy with Guardrails

Never deploy a custom model without guardrails. Fine-tuned models can still hallucinate, and the stakes are higher because users trust domain-specific models more.

Required guardrails for enterprise custom LLMs:

- Output validation — schema check, factual verification against source data

- Confidence thresholds — route low-confidence responses to human agents

- PII detection — scan outputs for accidentally revealed personal data

- Toxicity filters — prevent inappropriate content even from fine-tuned models

- Audit logging — record every input-output pair for compliance and debugging

NVIDIA NeMo: The Enterprise Custom LLM Platform

NVIDIA has emerged as the dominant platform for enterprise custom LLM development, and for good reason. Their NeMo framework provides the full stack:

flowchart TD

ROOT["Why Enterprises Need Custom LLMs: Base vs Fi…"]

ROOT --> P0["What Are Custom LLMs? A Definitive Taxo…"]

P0 --> P0C0["The CallSphere Enterprise LLM Customiza…"]

ROOT --> P1["The Business Case: Why Generic AI Costs…"]

P1 --> P1C0["1. Customer Deflection Failure"]

P1 --> P1C1["2. Brand Dilution"]

P1 --> P1C2["3. Compliance and Accuracy Risk"]

P1 --> P1C3["ROI Calculation: Custom vs. Base Model"]

ROOT --> P2["When to Use RAG vs. Fine-Tuning vs. Both"]

P2 --> P2C0["RAG Retrieval-Augmented Generation: Bes…"]

P2 --> P2C1["Fine-Tuning SFT: Best for Behavioral Co…"]

P2 --> P2C2["The Hybrid Architecture: RAG + Fine-Tun…"]

ROOT --> P3["Architecture Patterns for Enterprise Cu…"]

P3 --> P3C0["Pattern 1: Single Custom Model Monolith…"]

P3 --> P3C1["Pattern 2: Router + Specialist Models M…"]

P3 --> P3C2["Pattern 3: Cascading Models Cost-Optimi…"]

P3 --> P3C3["Pattern 4: Edge + Cloud Hybrid"]

style ROOT fill:#4f46e5,stroke:#4338ca,color:#fff

style P0 fill:#e0e7ff,stroke:#6366f1,color:#1e293b

style P1 fill:#e0e7ff,stroke:#6366f1,color:#1e293b

style P2 fill:#e0e7ff,stroke:#6366f1,color:#1e293b

style P3 fill:#e0e7ff,stroke:#6366f1,color:#1e293b

NeMo Customizer

- Fine-tune Llama 3.1 (8B, 70B, 405B), Nemotron, and Mistral models

- Supports LoRA, P-tuning, and full parameter fine-tuning

- Data preprocessing pipelines for enterprise document formats

- Distributed training across multi-GPU and multi-node clusters

NeMo Guardrails

- Programmable safety rails for custom model outputs

- Topical control (keep model on-topic for your domain)

- Fact-checking against knowledge bases

- Sensitive information detection and filtering

- According to NVIDIA's benchmarks, NeMo Guardrails reduce hallucination rates by 63% in enterprise deployments

NeMo Retriever

- Enterprise RAG pipeline with GPU-accelerated retrieval

- Supports NVIDIA's embedding models optimized for domain-specific retrieval

- Sub-50ms retrieval latency at enterprise scale (millions of documents)

NVIDIA AI Enterprise

- Production deployment platform with TensorRT-LLM optimization

- 3-5x inference speedup versus unoptimized deployment

- NVIDIA AI Enterprise licensees report 45% lower total cost of ownership versus self-managed open-source deployments

As of March 2026, NVIDIA's NIM (NVIDIA Inference Microservices) supports one-click deployment of custom fine-tuned models with automatic TensorRT-LLM optimization — reducing the gap between training a custom model and deploying it in production from weeks to hours.

Industry-Specific Custom LLM Applications

Custom LLMs deliver the highest ROI when applied to industry-specific workflows where domain knowledge creates a measurable accuracy gap.

Banking and Financial Services

| Use Case | Base Model Accuracy | Custom Model Accuracy | Impact |

|---|---|---|---|

| Loan eligibility assessment | 41% | 87% | Fewer false rejections, faster approvals |

| Fraud explanation generation | 55% | 92% | Better customer communication on disputes |

| Regulatory compliance Q&A | 38% | 84% | Reduced compliance officer workload |

| Product recommendation | 29% | 76% | Higher cross-sell conversion |

JPMorgan's IndexGPT (fine-tuned on financial data) and Bloomberg's BloombergGPT (pre-trained on financial corpus) demonstrated that domain-specific models outperform base models by 40-60% on financial NLP benchmarks.

Healthcare

Custom models trained on medical literature, clinical guidelines, and institution-specific protocols achieve 89% accuracy on clinical decision support tasks versus 52% for base models (Stanford HAI, 2025). CallSphere's healthcare voice agent leverages this by combining a medically-tuned model with RAG across provider databases — enabling the AI to accurately route patients to the right specialist, verify insurance eligibility in real-time, and schedule appointments across 3 hospital locations using 14 function-calling tools.

Legal

Thomson Reuters' CoCounsel and Harvey AI have demonstrated that legal-domain fine-tuning improves contract analysis accuracy from 45% (base model) to 91% (custom model). Key improvements include citation accuracy, jurisdiction-specific reasoning, and clause extraction precision.

Real Estate

CallSphere's OneRoof platform illustrates the custom LLM advantage in real estate: 10 specialist agents fine-tuned for NZ property terminology, suburb intelligence, and mortgage calculations. A base model doesn't know the difference between a "cross-lease" and "freehold" title type in New Zealand — a custom model does, and can explain the implications to a buyer in natural conversation.

Cost Analysis: Build vs. Buy vs. Customize

Option 1: Use Base Model APIs (No Customization)

- Monthly cost: $2,000-10,000 (API tokens)

- Setup time: Days

- Task accuracy: 30-50% for domain-specific tasks

- When to choose: Prototyping, generic tasks, internal tools

Option 2: RAG + Prompt Engineering (Light Customization)

- Monthly cost: $5,000-15,000 (API tokens + vector DB + infrastructure)

- Setup time: 1-4 weeks

- Task accuracy: 55-75% for domain-specific tasks

- When to choose: Most enterprises start here — best ROI for effort

Option 3: Fine-Tuning + RAG (Full Customization)

- Monthly cost: $8,000-30,000 (API tokens + training costs + infrastructure)

- Setup time: 4-8 weeks

- Task accuracy: 75-92% for domain-specific tasks

- When to choose: Customer-facing applications where accuracy directly impacts revenue

Option 4: Self-Hosted Custom Model

- Monthly cost: $15,000-100,000+ (GPU infrastructure + ops team)

- Setup time: 2-6 months

- Task accuracy: 80-95% (with full control over training data)

- When to choose: Regulated industries, data sovereignty requirements, very high volume

Key takeaway: For most enterprises, Option 3 (fine-tuning + RAG using hosted APIs) delivers the optimal balance of accuracy, cost, and time-to-production. Option 2 is the correct starting point — validate the use case with RAG first, then add fine-tuning when you have enough training data and clear accuracy requirements.

Common Mistakes in Enterprise Custom LLM Projects

Mistake 1: Fine-Tuning Before Building a RAG Pipeline

Many enterprises jump to fine-tuning because it sounds more "custom." But fine-tuning without RAG means the model's knowledge is frozen at training time. Build RAG first — it solves 60-70% of the accuracy gap — then fine-tune to close the remaining gap.

flowchart LR

S0["1. Customer Deflection Failure"]

S0 --> S1

S1["2. Brand Dilution"]

S1 --> S2

S2["3. Compliance and Accuracy Risk"]

S2 --> S3

S3["Step 1: Curate Training Data"]

S3 --> S4

S4["Step 2: Choose Your Fine-Tuning Platform"]

S4 --> S5

S5["Step 3: Train and Evaluate"]

style S0 fill:#4f46e5,stroke:#4338ca,color:#fff

style S5 fill:#059669,stroke:#047857,color:#fff

Mistake 2: Insufficient Training Data Quality

1,000 high-quality, expert-reviewed examples outperform 50,000 auto-generated examples. The banking chatbot in the NVIDIA example above works not because it was trained on millions of generic banking conversations, but because it was trained on that bank's specific products, policies, and customer interaction patterns.

Mistake 3: Ignoring Evaluation Infrastructure

Teams that spend 90% of effort on training and 10% on evaluation consistently ship underperforming models. Invest equally in automated evaluation: held-out test sets, LLM-as-judge scoring, human evaluation panels, and production A/B testing.

Mistake 4: One Model for Everything

The multi-agent pattern exists because no single fine-tuned model can excel at every task. CallSphere's after-hours escalation system uses 7 specialized agents — each tuned for its specific role (email classification, severity scoring, contact routing, Twilio telephony) — rather than one monolithic model trying to do everything. This mirrors how human organizations work: specialists outperform generalists on domain tasks.

Mistake 5: Neglecting Model Updates

Custom models degrade as the business changes. Product launches, policy updates, regulatory changes, and market shifts all invalidate training data. Plan for quarterly retraining cycles and monitor model accuracy continuously.

The Future of Enterprise Custom LLMs: 2026-2028

Trend 1: Automated Fine-Tuning Pipelines

NVIDIA's NeMo Curator and OpenAI's forthcoming automated data pipeline tools will reduce the training data curation bottleneck. By late 2026, expect "one-click fine-tuning" where you point a tool at your enterprise data and get a custom model in hours.

Trend 2: Mixture of Experts (MoE) for Cost Efficiency

Mistral's Mixtral architecture and Google's Gemini demonstrate that MoE models deliver large-model quality at small-model cost by activating only relevant expert modules per query. Enterprise custom MoE models — where each expert specializes in a business domain — will become standard by 2027.

Trend 3: Multi-Modal Custom Models

Text-only custom models are table stakes. The next frontier is custom models that understand your business's images (product photos, diagrams, floor plans), audio (call recordings, meeting transcripts), and video (surveillance, inspections). NVIDIA's recent Cosmos foundation model platform signals this trajectory.

Trend 4: On-Device Enterprise Models

Apple Intelligence, Qualcomm's on-device AI, and NVIDIA's Jetson platform are enabling custom models to run on edge devices — laptops, phones, IoT sensors — with no cloud dependency. For enterprises with data sovereignty requirements or latency constraints, this eliminates the build-vs-buy tradeoff entirely.

Trend 5: Agentic Custom Models

The most transformative trend is custom models that don't just answer questions but take actions. CallSphere's production deployments demonstrate this today — voice agents that schedule appointments, process payments, verify insurance, and escalate emergencies autonomously. By 2027, Gartner predicts 40% of enterprise AI deployments will be agentic, up from 8% in 2025.

How to Get Started: A 90-Day Enterprise Custom LLM Roadmap

Days 1-14: Discovery and Data Audit

- Identify top 5 use cases where generic AI falls short

- Audit available training data (conversation logs, knowledge base, expert responses)

- Define success metrics (accuracy, resolution rate, CSAT, cost per interaction)

Days 15-30: RAG MVP

- Deploy a RAG pipeline with your knowledge base

- Measure baseline accuracy against your metrics

- Identify remaining accuracy gaps that RAG alone can't close

Days 31-60: Fine-Tuning Sprint

- Curate 1,000-5,000 training examples for the top accuracy gaps

- Fine-tune on OpenAI, NVIDIA NeMo, or your platform of choice

- Evaluate on held-out test set and fix data quality issues

Days 61-75: Production Hardening

- Add guardrails (output validation, PII detection, confidence thresholds)

- Implement A/B testing (custom vs. base model on live traffic)

- Set up monitoring dashboards (accuracy, latency, cost, user satisfaction)

Days 76-90: Scale and Optimize

- Expand to additional use cases based on ROI data

- Implement cascading architecture for cost optimization

- Establish quarterly retraining cadence

Frequently Asked Questions

How much does it cost to fine-tune a custom LLM?

Fine-tuning costs range from $100 for small models (GPT-4o-mini with 1,000 examples) to $50,000+ for large-scale continued pre-training on billions of tokens. For most enterprise use cases, budget $5,000-15,000 for initial fine-tuning and $2,000-5,000 per quarterly retrain. The ROI typically exceeds 10x within the first quarter for customer-facing applications.

Should I fine-tune an open-source model or a proprietary API model?

If you need data sovereignty, regulatory compliance, or full control over model weights, choose open-source (Llama 3.1, Mistral, Qwen). If you need maximum capability with minimum operational overhead, choose proprietary APIs (OpenAI, Anthropic, Google). For most enterprises starting out, proprietary API fine-tuning is faster and cheaper to operationalize.

How many training examples do I need for enterprise fine-tuning?

The minimum viable dataset is 500-1,000 high-quality, expert-curated examples. Good results typically require 5,000-10,000 examples covering the full range of scenarios your model will encounter. Quality matters more than quantity — 1,000 expert-reviewed examples outperform 50,000 auto-generated ones.

What is the difference between RAG and fine-tuning?

RAG (Retrieval-Augmented Generation) gives the model access to external knowledge at inference time without changing the model itself. Fine-tuning modifies the model's weights to change its behavior, tone, and domain expertise. RAG is best for dynamic, frequently updated information. Fine-tuning is best for behavioral consistency, domain terminology, and output format control. The best enterprise deployments combine both.

Can I use custom LLMs for regulated industries like healthcare and finance?

Yes, but with additional requirements. Use self-hosted models or compliant cloud services (NVIDIA AI Enterprise, Azure OpenAI with data processing agreements). Implement audit logging for all model interactions. Ensure training data is properly anonymized. Work with your compliance team to validate the deployment against industry-specific regulations (HIPAA, SOX, GDPR, OCC guidelines). CallSphere's healthcare voice agent demonstrates this in production — HIPAA-compliant AI with BAA, encrypted PHI handling, and full audit trails across 3 hospital locations.

How does NVIDIA NeMo compare to OpenAI fine-tuning?

NVIDIA NeMo offers more control — you can fine-tune open-source models on your own infrastructure, use advanced techniques like continued pre-training, and deploy with TensorRT-LLM optimization. OpenAI fine-tuning is simpler — upload your data, click train, and use the API. Choose NeMo for data sovereignty, large-scale customization, or self-hosted requirements. Choose OpenAI for speed, simplicity, and when GPT-4o's base capabilities align with your needs.

How often should I retrain my custom LLM?

Retrain quarterly as a baseline. Trigger additional retraining when: (1) new products or policies launch, (2) accuracy metrics drop below threshold, (3) customer feedback indicates outdated responses, or (4) regulatory changes affect your domain. Pair retraining with RAG updates — RAG handles day-to-day knowledge freshness while retraining handles behavioral and terminology updates.

What is the ROI timeline for enterprise custom LLMs?

Most enterprises see positive ROI within 30-60 days for customer-facing applications. The primary savings come from reduced escalation to human agents (56% fewer escalations in our data), higher first-contact resolution (34% → 71% improvement), and lower cost per interaction (90-95% reduction versus human agents). Internal-facing applications (employee knowledge assistants, code generation) typically show ROI in 60-90 days through productivity gains.

Build Your Custom AI With CallSphere

CallSphere's production AI platforms demonstrate the power of custom, domain-specific models at enterprise scale. With 6 live products, 50+ AI agents, and 100+ tools across healthcare, real estate, salon, IT helpdesk, and sales verticals, we build AI that knows your business — not generic chatbots that sound like everyone else's.

Contact CallSphere to discuss how custom AI voice and chat agents can transform your customer interactions, or explore our features to see the multi-agent architecture in action.

#CustomLLMs #EnterpriseAI #FineTuning #RAG #NVIDIA #NeMo #LLMDeployment #AgenticAI #VoiceAI #AIStrategy #CallSphere #MachineLearning

Written by

CallSphere Team

Expert insights on AI voice agents and customer communication automation.

Try CallSphere AI Voice Agents

See how AI voice agents work for your industry. Live demo available -- no signup required.