How Do LLMs Learn New Knowledge? 7 Techniques Every AI Engineer Should Know

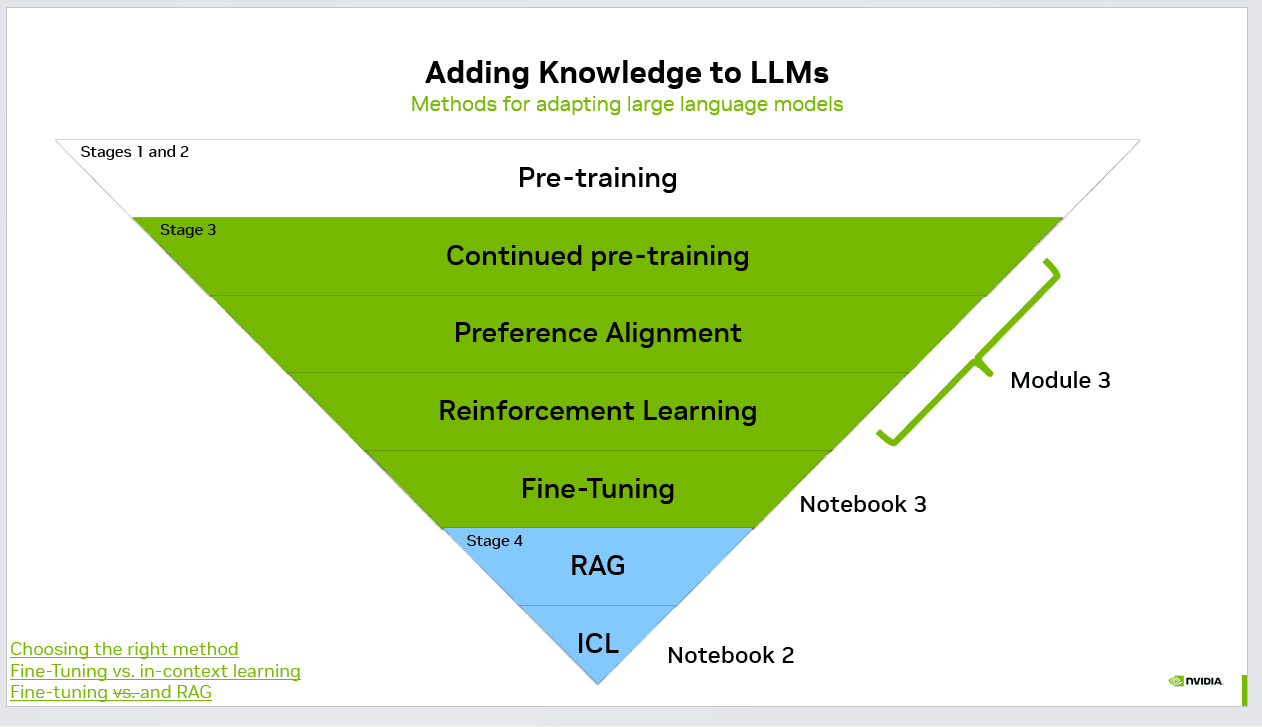

Large Language Models (LLMs) are powerful because they learn patterns from massive datasets. However, real-world applications often require adding new knowledge or adapting the model to a specific domain. Seven methods are commonly used to extend or specialize an LLM's capabilities.

1. Pre-training

Pre-training is the first stage, in which a model is trained on extremely large datasets such as books, websites, and code repositories, typically by learning to predict the next token. This stage teaches the model language structure, reasoning patterns, and general knowledge.

Pre-training is very expensive and typically performed only by large organizations due to the computational cost.
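
To make the training objective concrete, here is a minimal sketch of next-token prediction using a toy bigram counter instead of a neural network. The corpus, function names, and the bigram simplification are all assumptions for illustration; real pre-training optimizes the same kind of loss over billions of tokens with gradient descent.

```python
import math
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def next_token_prob(counts, prev, nxt):
    """Estimated probability that `nxt` follows `prev`."""
    total = sum(counts[prev].values())
    return counts[prev][nxt] / total if total else 0.0

def avg_next_token_loss(counts, tokens):
    """Average negative log-likelihood of each next token (lower is better)."""
    losses = []
    for prev, nxt in zip(tokens, tokens[1:]):
        p = next_token_prob(counts, prev, nxt)
        losses.append(-math.log(p) if p > 0 else float("inf"))
    return sum(losses) / len(losses)

# Hypothetical toy corpus standing in for books, websites, and code.
corpus = "the cat sat on the mat and the cat slept".split()
model = train_bigram(corpus)
print(next_token_prob(model, "the", "cat"))
print(avg_next_token_loss(model, corpus))
```

The loss printed at the end is the quantity a pre-training run tries to drive down; the expense comes from doing this at scale with a large neural network rather than a lookup table.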

2. Continued Pre-training

In continued pre-training, an already pre-trained model is trained further on domain-specific data using the same kind of training objective. For example, a general model can be trained further on medical literature or financial documents.

This helps the model adapt to specialized terminology and domain knowledge.

3. Preference Alignment

Preference alignment aims to make the model behave in a helpful and safe way. Human feedback or AI-generated feedback, often in the form of judgments about which of two responses is better, is used to guide the model toward preferred responses.

This stage improves:

Helpfulness

Safety

Tone and behavior
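
One common way to turn "response A is preferred over response B" into a training signal is a pairwise (Bradley-Terry style) loss. The sketch below assumes each response has already been given a scalar score by some scoring model; the scores and function names are hypothetical, and this is one possible formulation rather than the method used by any specific system.

```python
import math

def preference_probability(score_chosen, score_rejected):
    """Bradley-Terry probability that the chosen response beats the rejected one."""
    return 1.0 / (1.0 + math.exp(-(score_chosen - score_rejected)))

def preference_loss(score_chosen, score_rejected):
    """Negative log-likelihood of the labeled preference (lower is better)."""
    return -math.log(preference_probability(score_chosen, score_rejected))

# Hypothetical scores assigned to a preferred and a rejected response.
agrees = preference_loss(score_chosen=2.0, score_rejected=0.5)
disagrees = preference_loss(score_chosen=0.5, score_rejected=2.0)
print(agrees, disagrees)
```

The loss is small when the scores agree with the feedback and large when they contradict it, which is what pushes training toward the preferred responses.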

4. Reinforcement Learning

Reinforcement learning optimizes model responses using reward signals. The best-known variant is RLHF (Reinforcement Learning from Human Feedback), where the reward reflects human preference judgments.

Instead of only learning to predict the next token, the model is updated so that responses earning higher reward become more likely.
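
The idea of shifting probability toward higher-reward responses can be sketched with a tiny policy-gradient loop. This is a simplified illustration under strong assumptions: the "policy" is a softmax over three fixed candidate responses, the rewards are hypothetical constants, and the gradient of expected reward is computed exactly instead of being estimated from samples as in a real RLHF setup.

```python
import math

def softmax(logits):
    """Convert logits to probabilities in a numerically stable way."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def policy_gradient_step(logits, rewards, lr=0.5):
    """One exact gradient-ascent step on expected reward.

    d E[r] / d logit_k = p_k * (r_k - E[r])
    """
    probs = softmax(logits)
    expected = sum(p * r for p, r in zip(probs, rewards))
    return [l + lr * p * (r - expected) for l, p, r in zip(logits, probs, rewards)]

rewards = [0.1, 1.0, 0.3]   # hypothetical reward for each candidate response
logits = [0.0, 0.0, 0.0]    # start with a uniform policy
for _ in range(200):
    logits = policy_gradient_step(logits, rewards)

probs = softmax(logits)
print([round(p, 3) for p in probs])
```

After training, most of the probability mass sits on the candidate with the highest reward, even though no "correct next word" was ever shown to the policy.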

5. Fine-Tuning

Fine-tuning trains the model on a smaller curated dataset to specialize it for a particular task such as:

Customer support

Legal document analysis

Code generation

Fine-tuning updates the model weights, so the new behavior is built into the resulting model rather than supplied at request time.
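
Much of the practical work in fine-tuning is preparing the curated dataset. The sketch below shows one plausible way to clean prompt and completion pairs and serialize them as JSON Lines. The `prompt` and `completion` field names and the example records are assumptions for illustration; the exact schema depends on the training tool you use.

```python
import json

def to_jsonl(pairs):
    """Serialize (prompt, completion) pairs as one JSON object per line."""
    records = []
    for prompt, completion in pairs:
        prompt, completion = prompt.strip(), completion.strip()
        if not prompt or not completion:
            continue  # drop incomplete examples instead of training on them
        records.append(json.dumps({"prompt": prompt, "completion": completion}))
    return "\n".join(records)

# Hypothetical curated examples for the tasks listed above.
pairs = [
    ("Where is my order?", "I can check that. What is your order number?"),
    ("Summarize this contract clause.", ""),  # dropped: empty completion
    ("Write a function that adds two numbers.", "def add(a, b):\n    return a + b"),
]
jsonl = to_jsonl(pairs)
print(jsonl)
```

Filtering out incomplete records before training matters because the model will imitate whatever the dataset contains.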

6. Retrieval-Augmented Generation (RAG)

RAG retrieves information from external databases or knowledge bases and adds it to the prompt before the model generates an answer.

Instead of storing all knowledge in the model weights, the system fetches relevant documents at query time.

Advantages:

Knowledge can be updated without retraining

Works well with large knowledge bases

Can reduce hallucinations by grounding answers in retrieved text
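
The retrieve-then-generate flow can be sketched in a few lines. This example assumes a tiny in-memory knowledge base and uses word-overlap cosine similarity as a stand-in for the embedding search a production system would more likely use; the documents, function names, and prompt wording are all hypothetical, and the final call to an LLM is left out.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase and split text into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(documents, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]

def build_prompt(query, documents, k=1):
    """Place the retrieved documents in the prompt ahead of the question."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"

# Hypothetical knowledge base; updating it requires no retraining.
docs = [
    "Refunds are processed within 5 business days.",
    "Support is available Monday through Friday.",
    "Passwords must contain at least 12 characters.",
]
prompt = build_prompt("How long do refunds take?", docs)
print(prompt)
```

Changing an entry in `docs` changes what the model sees on the next query, which is why RAG suits knowledge that needs frequent updates.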

7. In-Context Learning (ICL)

In-context learning means providing examples directly in the prompt. The model infers the pattern from those examples, without any change to its weights, and generates a response accordingly.

For example, providing a few examples of question-answer pairs can guide the model to produce similar responses.

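This method requires no training and takes effect immediately, but it is limited by the context window and applies only to the prompt that contains the examples.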
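
A few-shot prompt of this kind is just string assembly. The sketch below uses a simple `Q:` / `A:` layout, which is an assumed convention rather than a required format, and the example pairs are hypothetical.

```python
def build_few_shot_prompt(examples, question):
    """Format example question-answer pairs, then the new question to complete."""
    parts = [f"Q: {q}\nA: {a}" for q, a in examples]
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)

# Hypothetical examples that demonstrate the desired answer style.
examples = [
    ("What is the capital of France?", "Paris"),
    ("What is the capital of Japan?", "Tokyo"),
]
prompt = build_few_shot_prompt(examples, "What is the capital of Italy?")
print(prompt)
```

The prompt ends with an open `A:` so the model continues in the same short-answer style as the examples.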

Choosing the Right Method

Each method has different trade-offs:

Pre-training: Builds broad general capability but is extremely expensive

Continued pre-training: Useful for domain adaptation

Fine-tuning: Good for task specialization

RAG: Well suited to knowledge that changes frequently

ICL: Fast and simple, but limited by context length

In modern AI systems, these techniques are often combined. For example, a system may use a fine-tuned model together with RAG and prompt engineering to deliver accurate and up-to-date results.

Understanding when to use each approach is critical for building scalable AI applications.

#AI #LLM #ArtificialIntelligence #MachineLearning #RAG #FineTuning #GenAI

Admin
